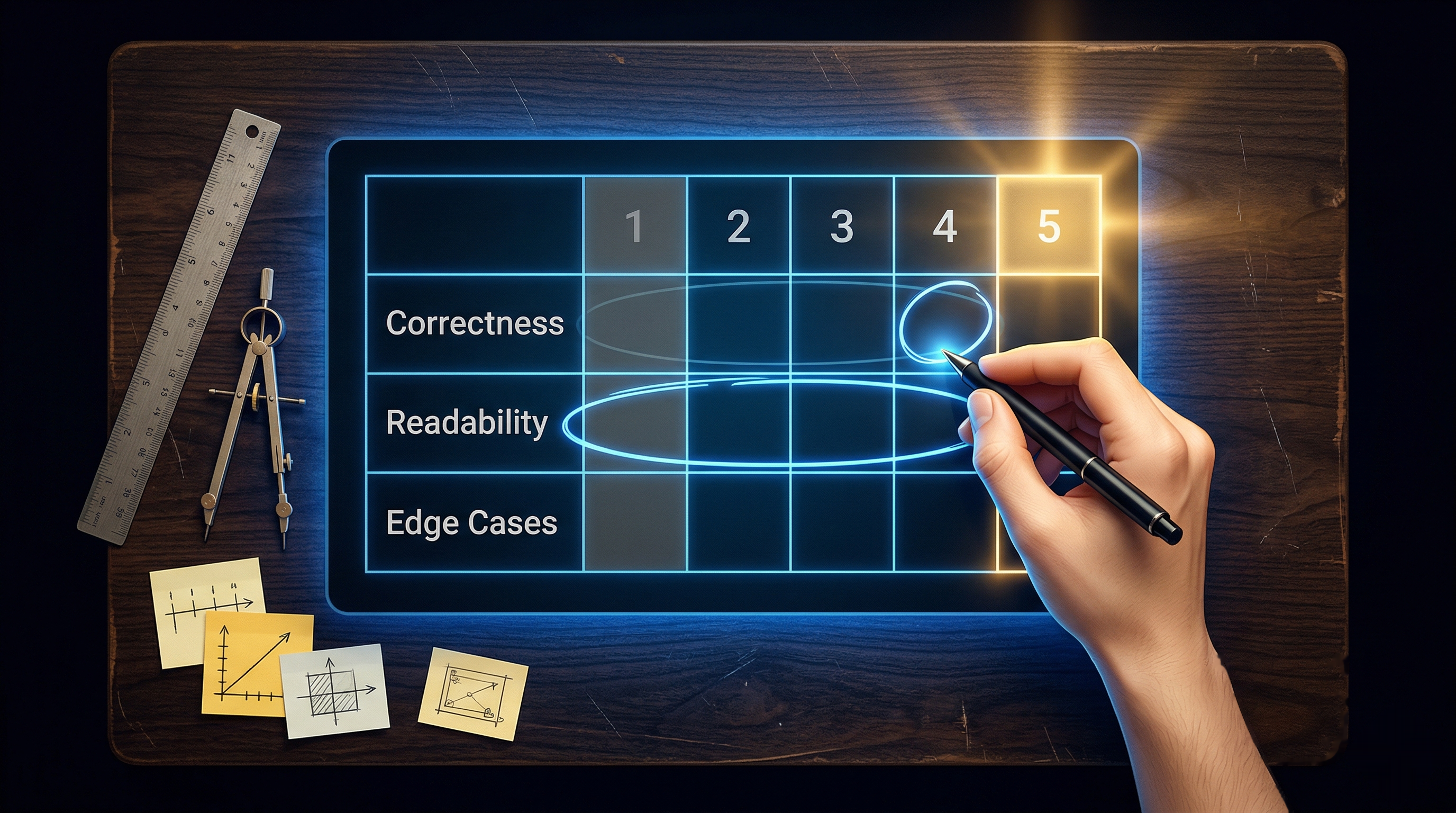

What Makes a Good RLHF Rubric for Coding Tasks

A rubric sounds simple: a list of criteria, a scoring scale, and some instructions. But most rubrics for AI code evaluation are quietly broken in ways that poison the training data. Here is what good rubric design actually looks like.